Google Search Console URL Indexing: The Complete 2026 Expert Guide

Quick Answer (AEO Summary) Google indexes a page when Googlebot crawls it, renders its content, and adds it to the search index. The fastest way to trigger indexing is: (1) submit an XML sitemap in Google Search Console, (2) use the URL Inspection Tool → "Request Indexing", and (3) ensure the page has no noindex tag, canonicalization conflict, or thin content signal. Average indexing time for a new domain: 4–14 days; for established domains with strong crawl budget: 24–72 hours.

With 15+ years of hands-on SEO experience across 750+ web projects, we have seen indexing problems cause more lost organic traffic than any other technical issue. In this guide we walk through every layer of Google Search Console URL indexing — from the fundamentals Googlebot uses to the advanced crawl budget strategies that accelerate discovery for large-scale sites.

Whether you manage a single-page startup or a 50,000-URL e-commerce platform, the framework below is the same one we apply in our web design and SEO services.

Table of Contents

- What Is Google Search Console Indexing?

- Setting Up Google Search Console

- The URL Inspection Tool: Master Guide

- Sitemap Submission Step-by-Step

- Manual Indexing Requests

- Indexing Issues and Fixes

- Advanced Indexing Strategies

- Multi-Language and International Indexing

- Measuring Indexing Success

- 30-Point Expert Indexing Audit Checklist

- Frequently Asked Questions

- Conclusion

What Is Google Search Console Indexing? {#what-is-indexing}

Google indexing is a three-stage pipeline that runs continuously:

Stage 1 — Crawling: Googlebot, Google's web crawler, discovers URLs through sitemaps, internal links, and external backlinks. It fetches the HTML (and renders JavaScript) and sends pages to Google's processing servers. According to Google's crawling documentation, Googlebot uses an "evergreen" Chromium-based renderer, meaning JavaScript-heavy single-page applications are fully rendered before indexing.

Stage 2 — Processing: Google parses the page content, identifies canonical URLs, evaluates E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness), checks Core Web Vitals, and determines whether the page is a duplicate or the preferred version.

Stage 3 — Indexing: Eligible pages enter the Google index and become eligible to appear in search results. Pages with low quality signals, thin content, soft-404 status, or canonicalization conflicts may be crawled but not indexed.

Why Indexing Matters for Business

A page that is not indexed does not exist in Google search. This means:

- Zero organic traffic regardless of content quality

- Invisible to featured snippets and People Also Ask boxes

- No AI Overview citations in Google's generative search results (a critical AEO signal in 2025)

For businesses investing in SEO-friendly web design — as we outline in our SEO-friendly web design guide — indexing is the prerequisite that makes every other optimization effort meaningful.

How Google Discovers New Pages

Google discovers URLs through four primary channels:

1. XML Sitemaps submitted in Google Search Console (highest priority signal)

2. Internal links from already-indexed pages on your domain

3. External backlinks from other indexed websites

4. Direct URL submission via the URL Inspection Tool

The most reliable and fastest path is always: sitemap submission + strong internal linking architecture.

Setting Up Google Search Console {#setup-gsc}

Before any indexing work, your property must be verified in Google Search Console. Use Google's official Search Console setup guide as the authoritative reference.

Property Types: Domain vs URL Prefix

| Property Type | Coverage | Verification Methods | Best For |
|---|---|---|---|
| Domain | All URLs across all subdomains and protocols | DNS TXT record only | Most sites |
| URL Prefix | Specific URL prefix only | HTML file, meta tag, DNS, GA, GTM | Subdomain isolation |

Our recommendation: Always use Domain property. It captures data from http://, https://, www. and non-www. versions in a single view, eliminating data fragmentation.

Ownership Verification Methods

According to Google's verification documentation, there are five methods:

1. DNS TXT record: most reliable; add a TXT record at your DNS provider (usually your domain registrar)

2. HTML file upload: upload the verification .html file Google provides to your server root

3. HTML meta tag: add a <meta name="google-site-verification"> tag to your homepage <head>

4. Google Analytics: available when your homepage already carries the Google Analytics tag and your Google account has edit access to that property

5. Google Tag Manager: available when the GTM container snippet is installed on the homepage and your account has publish permission on the container

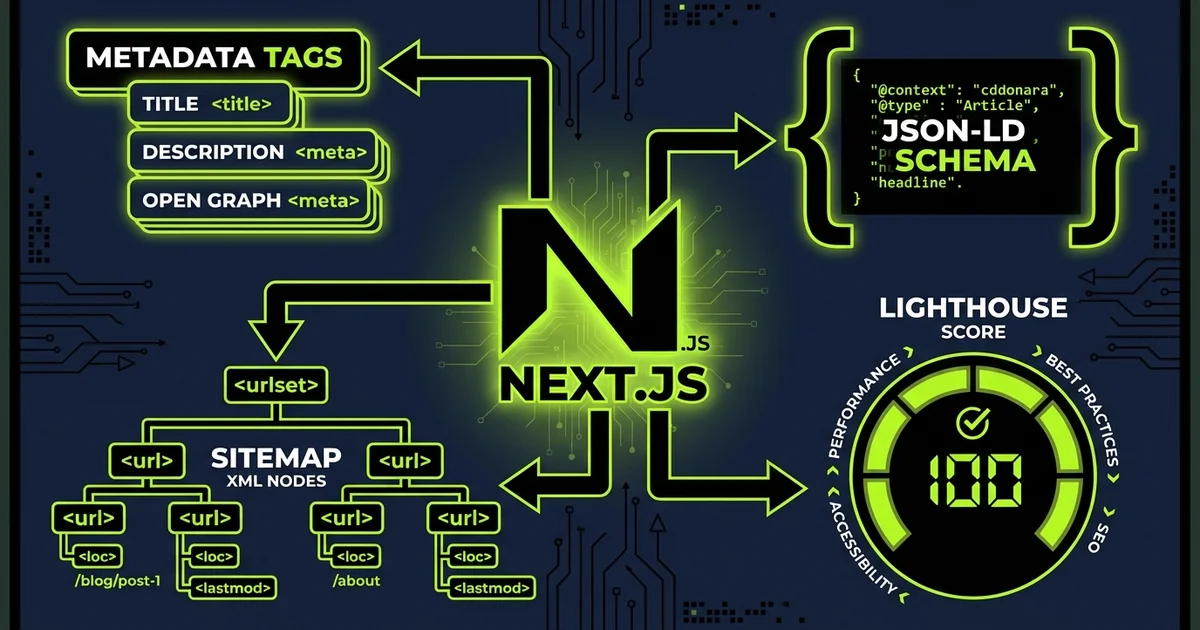

For sites built on Next.js (like the ones we build at Modern Web), the meta tag method is cleanest — it goes directly in the root layout's metadata export.
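As a rough sketch of the meta tag method in a Next.js App Router project, the token can be supplied through the `verification.google` field of the metadata object. The file location and the token value below are placeholders, not values from any real property.

```typescript
// app/layout.tsx (assumed location of the root layout)
// Placeholder token: copy the real value from the GSC "HTML tag" verification option.
export const metadata = {
  verification: {
    // Next.js renders this as <meta name="google-site-verification" content="...">
    google: "YOUR_VERIFICATION_TOKEN",
  },
};
```

After deploying, viewing the homepage source should show the generated meta tag inside `<head>` before you click "Verify" in GSC.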

Connecting Google Analytics and Tag Manager

Link your GSC property to GA4 via the "Associations" section in GSC settings. This unlocks:

- Search query data inside GA4 (Search Console Insights)

- Landing page performance combining impression/click data with session metrics

- A unified view of top-of-funnel (Google Search) to conversion

The URL Inspection Tool: Master Guide {#url-inspection-tool}

The URL Inspection Tool is the single most important diagnostic instrument in Google Search Console. It answers the question: "What does Google see when it looks at this specific URL?"

How to Access and Use It

1. Open Google Search Console → paste the exact URL in the top search bar

2. Press Enter to run the inspection

3. Read the result: "URL is on Google" or "URL is not on Google"

4. Expand "Coverage", "Enhancements", and "Experience" sections for details

Reading URL Status Reports

"URL is on Google" — The page is indexed. Check:

- Was the last crawl date recent? (Stale = low crawl priority)

- Is there a canonical mismatch? (Indexed URL ≠ canonical URL)

- Are Core Web Vitals issues flagged?

"URL is not on Google" — The page is not indexed. The reason will be one of the coverage status codes below.

Coverage Status Codes Reference Table

| Status Code | What It Means | Fix |
|---|---|---|
| Submitted and indexed | Healthy: page in index from sitemap | None needed |
| Indexed, not submitted in sitemap | In index but not in sitemap | Add URL to sitemap |
| Crawled – currently not indexed | Google crawled but chose not to index | Improve content quality, E-E-A-T |
| Discovered – currently not indexed | Found but not yet crawled | Improve internal linking, fix crawl budget |
| Duplicate, Google chose different canonical | Google prefers another URL | Align canonical tags with intended canonical |
| Page with redirect | URL redirects to another | Update links to point to destination |
| Excluded by 'noindex' tag | noindex directive present | Remove noindex if page should be indexed |
| Blocked by robots.txt | Googlebot disallowed | Update robots.txt Disallow rules |
| Soft 404 | Returns 200 but content signals not-found | Fix content or return proper 404 |
| Server error (5xx) | Server not responding | Fix server/hosting configuration |
| Not found (404) | Page does not exist | Create page or redirect to correct URL |

For the full list of coverage states, see Google's Index Coverage documentation.
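When triaging an exported list of URLs, the table above can be turned into a small lookup. The sketch below is illustrative only: the function names are hypothetical, and the fixes are simply the first-step suggestions from the table, not an official Google mapping.

```typescript
// Coverage states and first-step fixes, transcribed from the table above.
const COVERAGE_FIXES: Array<[string, string]> = [
  ["Submitted and indexed", "None needed"],
  ["Indexed, not submitted in sitemap", "Add URL to sitemap"],
  ["Crawled – currently not indexed", "Improve content quality, E-E-A-T"],
  ["Discovered – currently not indexed", "Improve internal linking, fix crawl budget"],
  ["Duplicate, Google chose different canonical", "Align canonical tags with intended canonical"],
  ["Page with redirect", "Update links to point to destination"],
  ["Excluded by 'noindex' tag", "Remove noindex if page should be indexed"],
  ["Blocked by robots.txt", "Update robots.txt Disallow rules"],
  ["Soft 404", "Fix content or return proper 404"],
  ["Server error (5xx)", "Fix server/hosting configuration"],
  ["Not found (404)", "Create page or redirect to correct URL"],
];

// Lowercase and unify dash/quote variants so labels match regardless of typography.
function normalizeState(state: string): string {
  return state
    .trim()
    .toLowerCase()
    .replace(/[\u2013\u2014]/g, "-")
    .replace(/[\u2018\u2019]/g, "'");
}

const FIX_BY_STATE = new Map<string, string>(
  COVERAGE_FIXES.map(([state, fix]): [string, string] => [normalizeState(state), fix]),
);

// Returns the suggested first fix, or a pointer to the docs for unlisted states.
function suggestFix(coverageState: string): string {
  return (
    FIX_BY_STATE.get(normalizeState(coverageState)) ??
    "Unlisted state: consult Google's Index Coverage documentation"
  );
}
```

Normalizing dashes matters because exports and copy-pasted labels often swap the en dash for a plain hyphen.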

The "Test Live URL" Feature

The URL Inspection Tool has two modes:

- Cached data — Shows Google's last crawl of the page

- Test Live URL — Fetches the page in real time using Googlebot

Always use "Test Live URL" when:

- You just published or updated a page

- You fixed an indexing error and want to verify the fix

- You suspect a JavaScript rendering issue

Sitemap Submission Step-by-Step {#sitemap-submission}

An XML sitemap is a file that lists the URLs you want Google to crawl and index, along with metadata such as the last modification date. Google uses <lastmod> when it is consistently accurate and ignores the <changefreq> and <priority> values. According to Google's Sitemaps documentation, sitemaps are especially important for:

- Large sites (500+ pages)

- New sites with few external links

- Sites with rich media content (images, video)

- Multi-language sites using hreflang

Creating an XML Sitemap

A minimal valid sitemap looks like this:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/en/blog/seo-guide</loc>
    <lastmod>2025-03-15</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.8</priority>
  </url>
</urlset>
```
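For sites that generate the sitemap at build time, a minimal sketch of a generator might look like the following. The `SitemapEntry` shape and the `buildSitemap` name are assumptions made for illustration; the output follows the same sitemaps.org structure as the example above, limited to `<loc>` and `<lastmod>`.

```typescript
interface SitemapEntry {
  loc: string;      // absolute canonical URL
  lastmod?: string; // W3C date, e.g. "2025-03-15"
}

// Escape the five characters that are reserved in XML text.
function escapeXml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Build a <urlset> document with one <url> element per entry.
function buildSitemap(entries: SitemapEntry[]): string {
  const urls = entries.map((entry) => {
    const lastmod = entry.lastmod
      ? `\n    <lastmod>${escapeXml(entry.lastmod)}</lastmod>`
      : "";
    return `  <url>\n    <loc>${escapeXml(entry.loc)}</loc>${lastmod}\n  </url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...urls,
    "</urlset>",
  ].join("\n");
}
```

Escaping matters in practice: an unescaped `&` in a query string is one of the most common causes of a sitemap that cannot be read.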

For multi-language sites, use a sitemap index file that references language-specific sitemaps — a pattern we implement in all multi-language projects following the approach in our web design tips for startups guide.

Submitting Your Sitemap in GSC

1. Go to Google Search Console → Sitemaps (left sidebar)

2. Enter your sitemap URL (e.g., /sitemap.xml or /sitemap-index.xml)

3. Click "Submit"

4. Monitor the "Discovered URLs" count vs "Indexed URLs" count

Pro tip: Submit both a sitemap index and individual language sitemaps. GSC will parse all URLs from all referenced sitemaps.

Diagnosing Sitemap Errors

Common sitemap errors in GSC and their fixes:

| Error | Cause | Fix |
|---|---|---|
| Couldn't fetch | Server returns non-200 on sitemap URL | Check URL is accessible; verify no auth required |
| Sitemap could not be read | Malformed XML | Validate with Google's Sitemap Validator |
| URLs are blocked by robots.txt | Disallow rules blocking sitemap URLs | Update robots.txt to allow relevant paths |
| URLs have issues | Individual URL problems | Click through to see specific URL errors |

Manual Indexing Requests {#manual-indexing-request}

The "Request Indexing" button in the URL Inspection Tool tells Google to prioritize crawling a specific URL. This is not a guarantee of indexing — it only accelerates the crawl queue.

When to Use Request Indexing

Use it for:

- Newly published high-priority pages (product launches, press releases, cornerstone content)

- Pages where you just fixed an indexing error

- Time-sensitive content (events, news articles, limited offers)

Do not use it for:

- Bulk URL submissions (use sitemaps instead)

- Pages that are fundamentally thin or low-quality

- Every page on your site (this misuses the quota)

Quotas and Limits (2025)

Google's Search Console Help confirms that a daily quota exists but does not publish the number:

- You can request indexing for a limited number of URLs per day per property

- The exact quota is not publicly documented; in our experience it is approximately 10–20 requests per day

- Quota resets every 24 hours

Our field data: Across 750+ projects, Request Indexing typically triggers a Googlebot crawl within 24–48 hours for established domains. New domains may take 3–7 days regardless of the request.

Indexing Issues and Fixes {#indexing-issues}

1. Crawled – Currently Not Indexed

What it means: Google visited the page but decided the content was not worth indexing.

Root causes:

- Thin content (fewer than ~300 words of substantive information)

- Near-duplicate content (very similar to other pages on your site)

- Low E-E-A-T signals (no author information, no external references, no unique data)

- Poor user experience signals (high bounce rate, low dwell time)

Fixes:

- Add original research, statistics, expert commentary, or first-hand case studies

- Merge thin pages using 301 redirects (content consolidation)

- Add structured author bio with credentials

- Reference authoritative external sources (like we do in this article linking to Google's own documentation)

2. Discovered – Currently Not Indexed

What it means: Google found the URL (likely via sitemap or internal link) but has not yet crawled it. This is a crawl budget issue.

Root causes:

- Too many low-quality or duplicate URLs consuming crawl budget

- Poor internal linking (orphan pages or pages buried 4+ clicks from homepage)

- Slow server response time (Googlebot abandons slow servers)

Fixes:

- Reduce crawl waste: noindex or remove low-value URLs (parameter-based, filtered, duplicate)

- Strengthen internal linking — every important page should be reachable within 2–3 clicks

- Improve TTFB (Time to First Byte): target under 200ms for HTML documents

3. Excluded by 'noindex' Tag

What it means: The page has a <meta name="robots" content="noindex"> tag or an X-Robots-Tag: noindex HTTP header.

Fix: Remove the noindex directive. For Next.js sites:

```typescript
// In the page metadata export, make sure robots is not set to noindex
export const metadata = {
  robots: { index: true, follow: true },
};
```

Critical check: Many CMS platforms and staging environments add noindex by default. Always verify your production environment does not inherit staging noindex settings.
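One way to catch an inherited staging setting is to check a fetched page for both forms of the directive. The helper below is a simplified sketch: `hasNoindex` is a hypothetical name, and it uses regular expressions rather than a full HTML parser, so treat it as a smoke test, not a validator.

```typescript
// Returns true when the X-Robots-Tag header or a robots/googlebot meta tag
// carries "noindex" (or "none", which is shorthand for noindex, nofollow).
function hasNoindex(html: string, headers: Record<string, string> = {}): boolean {
  // Header names are case-insensitive, so normalize before comparing.
  const headerValue = Object.keys(headers)
    .filter((name) => name.toLowerCase() === "x-robots-tag")
    .map((name) => headers[name].toLowerCase())
    .join(",");
  if (/\b(noindex|none)\b/.test(headerValue)) return true;

  // Inspect every <meta> tag and keep only those addressed to crawlers.
  const metaTags = html.match(/<meta\b[^>]*>/gi) ?? [];
  return metaTags.some((tag) => {
    const name = /\bname\s*=\s*["']?([^"'\s>]+)/i.exec(tag)?.[1]?.toLowerCase();
    const content = /\bcontent\s*=\s*["']([^"']*)["']/i.exec(tag)?.[1]?.toLowerCase() ?? "";
    return (name === "robots" || name === "googlebot") && /\b(noindex|none)\b/.test(content);
  });
}
```

Running a check like this against the production homepage and a few key templates after each deploy is usually enough to catch a leaked staging configuration.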

4. Soft 404 Errors

What it means: The page returns HTTP status 200 but Google interprets the content as "not found" — for example, a search results page with "0 results found" or a product page for an out-of-stock item with minimal content.

Fixes:

- Return proper 404 or 410 status for genuinely missing content

- Enrich thin "no results" pages with related content recommendations

- For out-of-stock products: keep the page with alternative product suggestions and rich content

5. Duplicate Content and Canonical Conflicts

According to Google's canonical URL documentation, Google consolidates duplicate URLs by choosing a "canonical" version. If your canonical tag points to a different URL than the one you want indexed, Google may index the wrong page.

Common canonical conflicts:

- http:// vs https:// (always redirect to https and canonical to https)

- www. vs non-www. (choose one and be consistent)

- Trailing slash vs no trailing slash (/page/ vs /page)

- URL parameters (?ref=, ?utm_source=, ?sort=)

Fix checklist:

- [ ] All self-referencing canonical tags point to the exact preferred URL

- [ ] 301 redirects consolidate all URL variants to canonical version

- [ ] rel="canonical" is in <head>, not <body>

- [ ] Sitemap only lists canonical URLs
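The conflicts listed above can be collapsed by deriving one canonical form per URL. The following is a minimal sketch under stated assumptions: it prefers https, non-www, and no trailing slash, and it strips `ref`, `sort`, and `utm_*` parameters. Those preferences and the `toCanonicalUrl` name are illustrative choices; adjust them to whichever variant your site has standardized on.

```typescript
// Parameters treated as non-canonical; this list is an illustrative assumption.
const NON_CANONICAL_PARAMS = new Set(["ref", "sort"]);

function toCanonicalUrl(rawUrl: string): string {
  const url = new URL(rawUrl);
  url.protocol = "https:";                            // http:// -> https://
  url.hostname = url.hostname.replace(/^www\./, "");  // assumed preference: non-www

  // Copy the keys first so deleting does not disturb the iteration.
  for (const key of Array.from(url.searchParams.keys())) {
    if (NON_CANONICAL_PARAMS.has(key) || key.startsWith("utm_")) {
      url.searchParams.delete(key);
    }
  }

  // Assumed preference: no trailing slash, except for the site root.
  if (url.pathname.length > 1 && url.pathname.endsWith("/")) {
    url.pathname = url.pathname.slice(0, -1);
  }
  url.hash = ""; // fragments never reach the server, so they are not part of the canonical
  return url.toString();
}
```

The same function can feed the canonical tag, the sitemap generator, and the redirect rules, which keeps all three in agreement.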

6. Core Web Vitals Impact on Indexing

While Core Web Vitals are officially a ranking signal (not a crawl/index gate), field data from our SEO-friendly web design work shows a strong correlation: pages with very poor LCP (>4s) or persistent CLS (>0.25) often stagnate in "Crawled – currently not indexed" status longer than pages with healthy Core Web Vitals.

Google's systems may deprioritize crawling pages from sites with widespread Core Web Vitals failures. Target: LCP < 2.5s, INP < 200ms, CLS < 0.1.

Advanced Indexing Strategies {#advanced-strategies}

Crawl Budget Optimization

Google's crawl budget documentation defines crawl budget as the number of URLs Googlebot crawls on your site within a given timeframe. Budget is determined by:

- Crawl rate limit — How fast Googlebot crawls without overloading your server

- Crawl demand — How many of your URLs Google wants to recrawl based on freshness and popularity

How to maximize crawl budget efficiency:

1. Eliminate crawl waste: noindex or block session ID URLs, filtered/sorted parameter URLs, printer-friendly versions, and internal search result pages

2. Reduce redirect chains: each redirect hop costs crawl budget; fix chains to single-hop 301s

3. Improve server response time: Googlebot allocates more budget to fast servers

4. Update sitemaps frequently: use <lastmod> accurately; Google prioritizes recently modified URLs

5. Leverage internal linking: deeper pages get crawled faster when they have multiple inbound internal links
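To find redirect chains worth flattening, a redirect map exported from your server or CDN configuration can be walked programmatically. This is a sketch only; `resolveRedirectChain` and the shape of the `redirects` map are hypothetical, and no network requests are made.

```typescript
interface ChainResult {
  finalUrl: string; // where the chain ends
  hops: number;     // number of redirects followed
  loop: boolean;    // true if the chain revisits a URL
}

// redirects maps a source URL to the URL it redirects to.
function resolveRedirectChain(
  start: string,
  redirects: Map<string, string>,
  maxHops = 10,
): ChainResult {
  const seen = new Set<string>([start]);
  let current = start;
  let hops = 0;
  while (redirects.has(current) && hops < maxHops) {
    current = redirects.get(current)!;
    hops += 1;
    if (seen.has(current)) return { finalUrl: current, hops, loop: true };
    seen.add(current);
  }
  return { finalUrl: current, hops, loop: false };
}
```

Any source with `hops` greater than 1 is a candidate for rewriting so that it redirects straight to `finalUrl`.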

IndexNow Protocol

IndexNow is an open protocol, supported by Bing and Yandex, that notifies participating search engines the moment a URL is published or updated. Google has not officially adopted IndexNow, so it does not speed up Google indexing directly; its value is in signaling content freshness across the broader search ecosystem.

Implementation: Add the IndexNow API key file to your server root and ping the endpoint when content changes. Several Next.js deployment platforms (Vercel, Netlify) have plugins that automate this.
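A ping can be sketched as building a JSON payload and posting it to the shared IndexNow endpoint. The field names below follow the published IndexNow protocol as we understand it; the host, key, and helper name are placeholders, and the actual `fetch` call is left commented out so the example has no side effects.

```typescript
interface IndexNowPayload {
  host: string;
  key: string;
  keyLocation: string; // public URL of the key file in the server root
  urlList: string[];
}

function buildIndexNowPayload(host: string, key: string, urls: string[]): IndexNowPayload {
  // A single request should only contain URLs that belong to the submitting host.
  const urlList = urls.filter((u) => new URL(u).hostname === host);
  return { host, key, keyLocation: `https://${host}/${key}.txt`, urlList };
}

// Sending the payload (not executed here):
// await fetch("https://api.indexnow.org/indexnow", {
//   method: "POST",
//   headers: { "Content-Type": "application/json; charset=utf-8" },
//   body: JSON.stringify(buildIndexNowPayload("example.com", "your-key", changedUrls)),
// });
```

Calling this from a publish hook or a post-deploy step keeps the notification tied to real content changes rather than a fixed schedule.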

JavaScript Rendering and Indexing

JavaScript-rendered content (React, Next.js, Vue, Angular) requires Googlebot to run a two-phase process: initial HTML crawl → Chromium rendering. The rendered DOM is what gets indexed.

Best practices for JS-rendered sites (aligned with Google's JavaScript SEO documentation):

- Server-Side Rendering (SSR) or Static Site Generation (SSG) delivers pre-rendered HTML to Googlebot — the fastest path to indexing

- Dynamic rendering serves pre-rendered HTML to bots, client-rendered HTML to users — acceptable as a transitional solution

- Client-Side Only rendering — always delays indexing; avoid for SEO-critical pages

All our Next.js builds use SSG (generateStaticParams) for blog and service pages, ensuring Googlebot receives fully rendered HTML on first crawl.

Internal Linking for Faster Indexing

Internal links are how you transfer "crawl equity" (the allocation of Googlebot's visit budget) across your site. A page with zero internal links pointing to it is effectively invisible to Googlebot unless it is in your sitemap.

Internal linking architecture principles:

- Homepage → Category/Hub pages → Individual articles (maximum 3 clicks from homepage)

- Cornerstone content receives links from multiple category pages

- New articles get 2–3 contextual internal links from existing high-traffic articles on publish day

- Avoid orphan pages (pages with no internal links pointing to them)
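The three-click rule and the orphan check can both be tested with a breadth-first search over the internal link graph. The sketch below assumes you already have the graph from a crawl export; `clickDepths` and `auditInternalLinks` are hypothetical helper names.

```typescript
// links maps each page path to the internal paths it links to.
function clickDepths(links: Map<string, string[]>, home = "/"): Map<string, number> {
  const depths = new Map<string, number>([[home, 0]]);
  const queue: string[] = [home];
  // Index-based iteration keeps the queue O(1) per step (no shift()).
  for (let i = 0; i < queue.length; i++) {
    const page = queue[i];
    for (const target of links.get(page) ?? []) {
      if (!depths.has(target)) {
        depths.set(target, depths.get(page)! + 1);
        queue.push(target);
      }
    }
  }
  return depths;
}

function auditInternalLinks(
  links: Map<string, string[]>,
  allPages: string[],
  maxDepth = 3,
): { orphans: string[]; tooDeep: string[] } {
  const depths = clickDepths(links);
  return {
    // Pages that cannot be reached from the homepage by following links.
    orphans: allPages.filter((p) => !depths.has(p)),
    // Reachable pages that sit more than maxDepth clicks away.
    tooDeep: allPages.filter((p) => (depths.get(p) ?? 0) > maxDepth),
  };
}
```

Breadth-first search is the natural fit here because the first time it reaches a page is always via the shortest click path.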

For a deeper framework, see our UX strategy guide for 2026 which covers information architecture and page hierarchy planning.

Multi-Language and International Indexing {#international-indexing}

Multi-language sites require careful handling in Google Search Console to ensure each language version is indexed correctly and shown to the right audience. See Google's internationalization documentation for the foundational principles.

Hreflang Implementation

Hreflang tells Google which language/region each URL serves and how alternative versions relate. Each language version must:

1. Include a self-referencing hreflang tag

2. Include hreflang tags pointing to all other language versions

3. Be reciprocal: if /en/ points to /es/, then /es/ must point back to /en/

```html
<link rel="alternate" hreflang="en" href="https://example.com/en/blog/guide" />
<link rel="alternate" hreflang="es" href="https://example.com/es/blog/guia" />
<link rel="alternate" hreflang="ar" href="https://example.com/ar/blog/guide" />
<link rel="alternate" hreflang="x-default" href="https://example.com/blog/rehber" />
```
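The reciprocity rule is easy to verify automatically once the hreflang annotations of each page have been collected, for example from a crawl. The sketch below assumes that data is already available; the `HreflangMap` type and function name are hypothetical.

```typescript
// Maps each page URL to the hreflang targets it declares (language code -> href).
type HreflangMap = Map<string, Record<string, string>>;

// Returns every declared alternate that does not link back to the declaring page.
function findMissingReturnLinks(alternates: HreflangMap): Array<{ from: string; to: string }> {
  const missing: Array<{ from: string; to: string }> = [];
  alternates.forEach((targets, page) => {
    for (const lang of Object.keys(targets)) {
      const target = targets[lang];
      if (target === page) continue; // a self-reference needs no return link
      const back = alternates.get(target);
      // Targets absent from the map (e.g. not crawled) are reported as missing too.
      const pointsBack =
        back !== undefined && Object.keys(back).some((l) => back[l] === page);
      if (!pointsBack) missing.push({ from: page, to: target });
    }
  });
  return missing;
}
```

An empty result means every declared alternate points back to its source, which is the condition described in point 3 above.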

International Targeting in GSC

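Google deprecated the legacy "International Targeting" report in 2022, so it may no longer be available for your property. Where you can still open it, or in a third-party crawler that validates hreflang, use the report to: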

- Verify hreflang tags are parsed correctly

- Check for hreflang errors (missing reciprocal tags, invalid language codes)

- Monitor which country your site is associated with for geographic targeting

Common Multi-Language Indexing Mistakes

| Mistake | Impact | Fix |
|---|---|---|
| Translated slug mismatch in hreflang | Wrong language served to wrong region | Align hreflang href with actual published URL |
| Missing x-default hreflang | No fallback for unmatched locales | Always include x-default pointing to primary version |
| Duplicate content across languages (machine translation only) | Low E-E-A-T signals, thin content flags | Localize content; add region-specific examples |
| RTL content in LTR template | Poor UX causing high bounce rate | Use dir="rtl" and RTL-aware CSS |

Measuring Indexing Success {#measuring-success}

Key GSC Metrics to Track

Page Indexing Report (Indexing → Pages):

- Indexed: Total pages successfully in the index — track growth over time

- Not indexed: Total pages excluded — investigate the reasons

- Trend: Indexed count should grow as you publish; sudden drops signal a technical issue

Search Performance Report:

- After indexing, watch for impressions appearing within 1–4 weeks

- Clicks confirm the page is both indexed and ranking for queries

- Any recorded average position confirms the page is indexed; if it sits outside the top 10 for target keywords, the remaining work is ranking optimization

Setting Up Indexing Monitoring

We recommend these monitoring practices from our projects:

1. Weekly GSC coverage review: check for new "Not indexed" URL increases

2. Google Alerts for site: operator: site:yourdomain.com new-slug; verify new pages appear

3. Screaming Frog monthly crawl: compare crawled URLs vs GSC indexed URLs

4. Core Web Vitals field data: poor CWV pages are strong candidates for "Crawled – not indexed"
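The crawl-versus-index comparison in step 3 comes down to a two-way set difference. The sketch below assumes both lists have already been exported as plain URL arrays; `diffUrlSets` is a hypothetical helper, not part of any tool.

```typescript
// Compare the URLs a crawler found with the URLs GSC reports as indexed.
function diffUrlSets(
  crawledUrls: string[],
  indexedUrls: string[],
): { notIndexed: string[]; indexedButNotCrawled: string[] } {
  const crawled = new Set(crawledUrls);
  const indexed = new Set(indexedUrls);
  return {
    // Crawlable pages that Google has not indexed: review content and coverage state.
    notIndexed: crawledUrls.filter((u) => !indexed.has(u)),
    // Indexed pages the crawler never reached: likely orphans or legacy URLs.
    indexedButNotCrawled: indexedUrls.filter((u) => !crawled.has(u)),
  };
}
```

Both lists should be normalized to the same canonical form before comparing, otherwise trailing-slash or protocol differences will show up as false mismatches.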

30-Point Expert Indexing Audit Checklist {#expert-checklist}

Use this checklist before launching any new site or diagnosing indexing problems:

Technical Foundation

- [ ] HTTPS enabled with valid SSL certificate

- [ ] www vs non-www redirect configured (301 to preferred version)

- [ ] robots.txt accessible at /robots.txt and not blocking important paths

- [ ] XML sitemap exists, is valid XML, and is submitted in GSC

- [ ] Sitemap only lists canonical URLs (no redirects, no noindex URLs)

- [ ] No noindex tags on pages that should be indexed

- [ ] No orphan pages (all important pages have ≥1 internal link)

- [ ] Server response time < 200ms TTFB for HTML documents

Canonicalization

- [ ] All pages have a self-referencing canonical tag

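- [ ] Canonical tag in <head>, absolute URL, no trailing variations

- [ ] Paginated pages are individually indexable with self-referencing canonicals (Google no longer uses rel="next"/rel="prev" as an indexing signal)

- [ ] URL parameters handled with canonical tags and consistent internal linking (the GSC URL Parameters tool was retired in 2022)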

- [ ] Duplicate content pages use canonical pointing to preferred version

Content Quality (E-E-A-T)

- [ ] Every indexed page has ≥300 words of original, substantive content

- [ ] Author identified with credentials/bio

- [ ] External authoritative sources cited (like Google documentation)

- [ ] Publication and update dates visible and accurate

- [ ] No keyword stuffing or spun/machine-translated content without localization

JavaScript & Rendering

- [ ] Critical content present in server-rendered HTML (not injected by JS after load)

- [ ] URL Inspection "Test Live URL" shows expected rendered content

- [ ] Core Web Vitals: LCP < 2.5s, INP < 200ms, CLS < 0.1

- [ ] No render-blocking resources preventing Googlebot from seeing content

Multi-Language (if applicable)

- [ ] Hreflang tags present on all language versions

- [ ] Hreflang tags are reciprocal across all language versions

- [ ] x-default hreflang present

- [ ] Each language version has unique, localized content

- [ ] Hreflang validation shows no errors (site crawler, or the legacy International Targeting report where still available)

Monitoring

- [ ] GSC property verified and data flowing

- [ ] GSC linked to GA4

- [ ] Email alerts configured in GSC for indexing coverage issues

- [ ] Weekly indexing review scheduled

- [ ] Sitemap auto-updates when new content is published

Frequently Asked Questions {#faq}

How long does it take Google to index a new page?

For established domains with healthy crawl budget, new pages submitted via sitemap and with strong internal linking are typically indexed within 24–72 hours. For brand-new domains with no external links, expect 4–14 days or longer. Using the URL Inspection Tool → "Request Indexing" can accelerate this timeline.

Why is my page showing "Crawled – currently not indexed"?

This status means Google visited your page but chose not to include it in the index. The most common causes are: thin or low-quality content, near-duplicate content already indexed elsewhere on your site, or poor E-E-A-T signals. Strengthen the content with original data, expert authorship, and authoritative external references, then request re-indexing.

Does submitting a sitemap guarantee indexing?

No. A sitemap is a crawl invitation, not an indexing guarantee. Google still evaluates each URL for quality, canonicalization, and relevance before adding it to the index. Sitemaps improve the speed and completeness of discovery, but quality is the ultimate determinant of indexing.

How many URLs can I request indexing for per day?

Google does not publicly document the exact quota, but in our experience it is approximately 10–20 indexing requests per day per property. For bulk indexing, rely on sitemaps rather than manual requests.

Can noindex in robots.txt stop indexing?

No — robots.txt controls crawling, not indexing. If Googlebot is blocked from crawling a URL via robots.txt but discovers it through a link, Google may still index it (based on the link anchor text alone, without content). To prevent indexing, use a noindex meta tag or X-Robots-Tag HTTP header on the page itself, not in robots.txt.

What causes the "Soft 404" error in Google Search Console?

A soft 404 occurs when a page returns HTTP 200 (success) but the content signals to Google that the page does not contain useful information — for example: "No results found," "Product unavailable," or a page that is nearly empty. Fix by either returning a proper 404/410 status or enriching the page with meaningful content.

How does crawl budget affect large e-commerce sites?

E-commerce sites with thousands of filter/sort URL combinations can exhaust their crawl budget on parameter-based URLs that are near-duplicates. Because GSC's URL Parameters tool was retired in 2022, handle parameters on the site itself: use noindex on paginated filter pages and implement canonical tags pointing to the base category URL. This frees budget for product pages with unique content.

Does page speed affect whether a page gets indexed?

Indirectly, yes. Very slow pages increase Googlebot's crawl time allocation per URL, which reduces the number of pages crawled per budget cycle. Additionally, pages with poor Core Web Vitals field data are often deprioritized in Googlebot's crawl queue. Target TTFB < 200ms for HTML responses.

What is the difference between "Excluded" and "Not indexed" in GSC?

Both mean the page is not in Google's index, but the cause differs. Excluded pages are intentionally kept out of the index (by noindex, robots.txt, canonical, or redirect). Not indexed (without a specific exclusion reason) typically means Google found the page but did not crawl or index it yet — usually a crawl budget or quality issue.

How do I fix hreflang errors in Google Search Console?

The most common hreflang errors are: missing reciprocal tags (page A points to page B, but B does not point back to A), incorrect language codes (use BCP 47 format: en, en-US, es, ar), and URLs in hreflang not matching the actual published URL. Fix all three, then submit updated sitemaps containing the corrected hreflang tags.

Is Google Search Console free?

Yes — Google Search Console is completely free. It provides URL inspection, sitemap management, coverage reports, search performance data, Core Web Vitals reports, manual actions, and security issue alerts at no cost.

Should I use domain property or URL prefix in Google Search Console?

Use domain property in almost all cases. It aggregates data from all subdomains and both HTTP/HTTPS protocols in one view, giving you the complete picture of your site's indexing status. URL prefix properties are useful only when you need to isolate a specific subdomain or protocol from a shared domain.

Conclusion {#conclusion}

Google Search Console URL indexing is not a one-time setup task — it is an ongoing discipline that sits at the intersection of technical SEO, content quality, and site architecture. The framework in this guide distills 15+ years of experience managing indexing across 750+ projects:

1. Verify your GSC property and ensure all language versions are covered

2. Submit a valid sitemap and monitor Discovered vs Indexed URL counts weekly

3. Use the URL Inspection Tool to diagnose every new page before and after publishing

4. Fix coverage errors by root cause, not surface symptom; thin content requires content work, not technical tweaks

5. Optimize crawl budget by eliminating waste (parameters, filters, near-duplicates)

6. Implement hreflang correctly for multi-language sites; missing reciprocal tags are the #1 international indexing error

If your site has indexing problems you cannot diagnose, reach out to our team — we offer a free technical SEO audit as part of our web design and SEO packages.

For more SEO and web design strategy content, explore our complete blog or start with our deep-dive SEO-friendly web design guide.

---

Author: İsmail Günaydın